Empowering you to make informed decisions

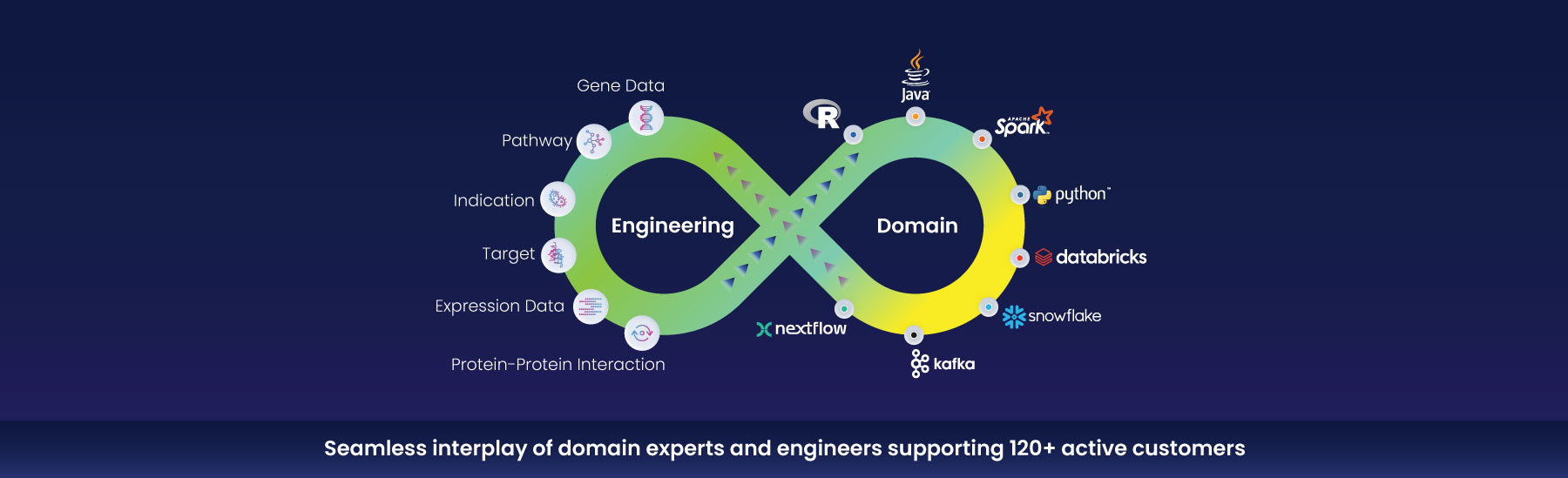

Growing R&D organizations face data engineering challenges such as processing raw data, building the right infrastructure to consolidate and enrich a variety of data repositories, and handling large-scale data processing. Our experts handle a wide variety of data types using the latest programming languages and bioinformatics workflow frameworks. We complement your teams in developing novel algorithms and methods to advance discovery and translational research programs.

Our services

We take a science-first approach to data engineering for R&D-IT in pharma. Our domain experts and experienced engineers deliver a focused, end-to-end service catalog.

We connect science and technology to support your entire digital journey, from strategy definition through delivery to ongoing support. Our services include:

Data modeling

Process automation

Workflow orchestration

Managed services

Our domain + tech approach

Case study

Enterprise-wide marketplace development

The client sought to develop a centralized marketplace with:

- hosting on a data lake

- metadata standards to facilitate search for bench scientists

- embedded support for ongoing user management

- audit-friendly infrastructure

There are around 10,000 scientists in the client’s R&D operation, but very little data on medicine development and trials was shared between them. Parts of this data had previously been managed with traditional data warehousing, with attempts to structure and organize it using technologies such as Oracle and Teradata.

We accelerated the timeline by running parallel workflows for data curation, data management, application development, and GxP documentation development.

The solution included:

- Kafka, a distributed data streaming platform, for high-throughput, low-latency processing of large data volumes without a noticeable drop in performance

- Airflow, a workflow orchestration tool, to author, schedule, and monitor the data processing workflows
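As a rough illustration of what workflow orchestration means in this context, the sketch below runs a few tasks in dependency order using only the Python standard library. It is not Airflow's API and not the client implementation; the task names (`extract`, `curate`, `model`, `load`) and the `run_pipeline` helper are hypothetical.

```python
from graphlib import TopologicalSorter

# Hypothetical tasks: each name maps to the set of tasks it depends on.
DEPENDENCIES = {
    "extract": set(),
    "curate": {"extract"},
    "model": {"curate"},
    "load": {"curate", "model"},
}


def run_pipeline(dependencies, actions):
    """Run each task only after all of its upstream tasks; return the run order."""
    order = []
    for task in TopologicalSorter(dependencies).static_order():
        actions[task]()  # a real orchestrator would also schedule, retry, and log
        order.append(task)
    return order
```

Calling `run_pipeline(DEPENDENCIES, actions)` with a dictionary of callables keyed by task name executes them so that, for example, `load` never starts before `curate` and `model` have finished. An orchestration tool adds scheduling, retries, and monitoring on top of this basic ordering.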

Using these tools, we extracted data from public and legacy data sets relevant to translational R&D. We then structured and engineered the data, maintaining metadata and required relationships via semantic modeling and knowledge graphs. We designed, developed, tested, and deployed the marketplace application, then provided GxP documentation as the client required.
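To make the semantic modeling step more concrete, here is a minimal sketch that stores study metadata as subject-predicate-object triples and queries them. The identifiers (`study:001`, `hasIndication`, and so on) and the `find_subjects` helper are invented for illustration and do not reflect the client's actual schema or data.

```python
from typing import NamedTuple


class Triple(NamedTuple):
    subject: str
    predicate: str
    obj: str


# Hypothetical records, not real study data.
GRAPH = [
    Triple("study:001", "hasIndication", "indication:asthma"),
    Triple("study:001", "hasPhase", "phase:2"),
    Triple("study:002", "hasIndication", "indication:asthma"),
    Triple("study:002", "hasPhase", "phase:3"),
]


def find_subjects(graph, predicate, obj):
    """Return the sorted subjects that carry the given predicate/object pair."""
    return sorted(
        {t.subject for t in graph if t.predicate == predicate and t.obj == obj}
    )
```

A search such as `find_subjects(GRAPH, "hasIndication", "indication:asthma")` returns every study linked to that indication, which is the kind of relationship-based lookup that consistent metadata makes possible.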

With our help, the client migrated over 29 million study records from the legacy source to the new application.

Why Excelra

Unison of data, deep domain, and tech

We’re data evangelists, unapologetically committed to activating the value of data in your R&D activities.

Aligned to your environment

Our team of 150+ data engineers uses the most advanced tools and technology on the market to provide services that are aligned with your tech environment.

End-to-end data lifecycle management

We help manage your data throughout its lifecycle, so it’s optimized for maximum data security and insights.

Ready to get more from data?

Tell us about your objectives. We’ll help get you there.
